Several people have responded to my previous ARMv4T patch for the "Donut" branch of Android, and have provided excellent feedback.

I recently checked out the code again, and thought that it would be wise to do some rigorous testing of all native binaries on the Android filesystem.

Please find an updated (but still incomplete) patch for ARMv4T here.

I've thrown together a small script to disassemble and check binaries for invalid opcodes. You can find the output here.

The output shows which executables and shared libraries still have non-ARMv4T machine code, and currently only 103 of 160 components pass the test. Therefore, there is still quite a bit of work to be done. For the remaining 57 components that fail, the illegal (non-ARMv4T) opcodes are also revealed.
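A check of this kind can be sketched in a few lines of Python. This is not the script linked above, just a minimal illustration under stated assumptions: the input is objdump-style text with tab-separated fields, and the mnemonic list (`POST_V4T`) is a small hand-picked subset of instructions introduced in ARMv5T and later, not an exhaustive one.

```python
import re

# Illustrative subset of mnemonics introduced after ARMv4T (ARMv5T and later).
# This list is an assumption for the sketch, not the one used by the real script.
POST_V4T = ("blx", "clz", "bkpt", "pld", "ldrd", "strd")

# Standard ARM condition-code suffixes that may follow a mnemonic.
CONDS = "eq|ne|cs|hs|cc|lo|mi|pl|vs|vc|hi|ls|ge|lt|gt|le|al"

_PATTERN = re.compile(r"^(%s)(%s)?$" % ("|".join(POST_V4T), CONDS))


def find_illegal_opcodes(disassembly):
    """Return (address, mnemonic) pairs for lines using a post-ARMv4T mnemonic.

    Expects lines shaped like '<addr>:\t<hex word>\t<mnemonic>\t<operands>'.
    """
    hits = []
    for line in disassembly.splitlines():
        fields = [f.strip() for f in line.split("\t")]
        if len(fields) < 3 or not fields[0].endswith(":"):
            continue  # skip section headers, labels and blank lines
        mnemonic = fields[2].lower()
        if _PATTERN.match(mnemonic):
            hits.append((fields[0].rstrip(":"), mnemonic))
    return hits
```

A component "passes" when the returned list is empty. Matching on mnemonics is crude (older syntax places the condition code differently for some instructions), so treat the result as a hint rather than proof.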

NB: Just in case you find diffs generated with 'repo diff' just as annoying as I do, you can use this utility to automate the patching process.

update-20091215: The build system is doing "ok", but it's still not perfect. Independent of the compile-time issues there are, of course, run-time issues. The most significant one is dynamic code generation, and you can read some of my thoughts here. To address the issue, I will be working with the android-on-freerunner people. For any further discussion about this topic, I suggest you join the android-on-freerunner mailing list.

20091209

Google Chrome Beta on Gentoo Linux

I had already tried Google's Chrome browser (the developer edition was called Chromium) and thought it was great, aside from a few minor bugs. For example, sometimes drop-down lists would not have any entries, the menu for the Flash plugin would not respond, etc. It looks like Google released a beta version for Linux yesterday that fixes several of the minor bugs I had noticed.

I modified an ebuild to download and install Chrome from the Debian package. In any event, you can put it in your own portage overlay under www-client/google-chrome-bin/, and it should do the trick. You might have to add 'www-client/google-chrome-bin' to your /etc/portage/package.keywords file. To install Chrome, just run PORTDIR_OVERLAY="/path/to/my/portage/overlay" emerge -av google-chrome-bin.

Overall, the Chrome experience is quite good. It's very fast, and I haven't encountered any errors or bugs so far. Most pages render perfectly. You might also want to install FlashBlock.

20091205

Dalvik VM Internals

To anyone who is interested in Android, I would highly suggest that you check out this video if you haven't already, called "Dalvik VM Internals".

The video was probably published a long time ago already, but it shows just how much work went into the Dalvik VM by Dan Bornstein and others who worked on the project with him. In particular, it explains why it's necessary to think about how code will be executed on a machine, even when writing in a high-level language such as Java, which is something C++ gurus have also been emphasizing for years.

Luckily, I do most of my work in C ;-) but I do periodically rewrite chunks of software in lower-level languages to reduce overhead. That would involve, for example, converting pure Java or C++ image processing code to C or ASM. The topics covered in this video do a good job of explaining how the features of higher-level languages are ultimately implemented in machine code.

Very good presentation.

20091128

Alternative Browsers for Linux

All I can say right now is wow. Having been a long time user of Firefox, it's been a while since I last used a different browser under Linux... well... because there really weren't any better alternatives for a long time.

However, since webkit-gtk has been stabilizing lately, I decided to check out a couple of alternative browsers that were mentioned in the Linux Browser Shootout - namely Midori and Epiphany. I already tried Chrome (Chromium) a while ago and was quite impressed. What I really liked about Chrome was a) that it was blazingly fast, and b) that it did a great job of importing my Firefox preferences and bookmarks. There are still a few other issues with Chrome though. For example, some Flash functionality does not work properly. I'm sure that will be sorted out eventually.

So anyway, on to the good stuff. Most people are familiar with Epiphany (a descendant of Galeon) from when it used the Gecko rendering engine (from the Mozilla project). Epiphany is the official browser of the Gnome desktop, if I'm not mistaken. Back when it was based on Gecko, it was good but just seemed like a less full-featured version of Mozilla or Firefox. Ever since they changed the default rendering engine to WebKit, it has been blazingly fast. Epiphany still suffers from having too few preference settings for the 'advanced' user. Also, when in full screen mode (which I tend to use frequently while browsing on my EEE 701) there is an annoying button at the top-right that says 'Leave Fullscreen', and it doesn't go away (until you leave fullscreen), which becomes bothersome when trying to actually view / click something that is underneath the button. Epiphany accomplished its goal of providing a very simple and easy-to-use browser for the Gnome desktop, but it really lacks appeal for advanced users.

Next, I tried Midori - and was really impressed. Not only was it fast (using the webkit-gtk engine), but it also had a few more settings that Epiphany was missing. Furthermore, it didn't have an annoying 'Leave Fullscreen' button to get in the way of things. There are a couple of issues I've found with it still, such as random cursor placement in a text area after pressing End / Left / Right / Down, etc, but I'm sure those will get ironed out eventually too.

I'm actually writing this entry using Midori, and have to say that it's very responsive and feels extremely light-weight. There is practically zero load time when going through GMail or Slashdot. Considering the Midori project has had less time to reach maturity than either Firefox or Epiphany, and has had less manpower, I would have to give it the best review overall.

Now, I am a huge fan of Firefox - Mozilla and its contributions to the Linux community have been fantastic over the years - but I have to admit that the webkit-gtk engine will probably take the GNU/Linux Desktop Browser crown once mature, whether that is in Chromium, Midori or (with little likelihood) Epiphany.

20091108

Simple Fortran Cross-Compiler for ARM

Run Fortran code on my handheld? Are you insane?

That's probably the first thing that comes to your mind after seeing the title of my latest post - but with the power of ARM processors of today, why wouldn't we run Fortran programs on them?

Fortran was (back in the 70's ... and arguably still is) the scientific computing language of choice for many academics and engineers. It became the basis for many of the most advanced matrix-algebra math libraries available to date, including BLAS, LINPACK, and LAPACK, which are used by the most powerful number-crunching machines in the world, not to mention all of the banks and stock-exchanges! What I find more interesting is that the popular math engine known as MATLAB makes heavy use of BLAS and LAPACK, as does a free MATLAB-like environment called Octave.

I should take a step back and say that the simple cross-compiler I have devised is not in itself a cross-compiler, but a two-step solution using F2C and your friendly-neighborhood cross-GCC ;-) That is, the Fortran code is first translated to C code, and then cross-compiled into machine code. Although many people swear that well-written Fortran code pushed through a good Fortran compiler will always blow away C code (and they are probably right), something is better than nothing. The advantage of using F2C is that you don't need to link your programs to libgfortran, and having fewer dependencies is always good in embedded systems.

In any event, F2C (and the resultant libf2c) were authored by several friendly and very smart people who were kind enough to make their software available to the general public on NetLib.Org.

Using a popular perl script called Fort77, and my tiny patch, you should be able to cross-compile Fortran code to object code (.f -> .o) just like you would with C (.c -> .o). You'll need a libf2c.so compiled for ARM as well as f2c.h installed and in your cross-compiler's default search paths. I built the cross-compiled libf2c using an armv5tel-softfloat toolchain using Gentoo's crossdev, and created a small overlay for the package, available here.
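The two-step flow described above (.f -> .c -> .o) can be sketched as follows. This is not Fort77 or my patch, only a minimal illustration: the compiler name in `CROSS_CC` is an assumption (substitute whatever your crossdev toolchain installs), and the sketch assumes the Fortran source sits in the current directory so that the generated C file lands next to it.

```python
import os
import subprocess

# Assumed cross-compiler name; replace with the one your toolchain provides.
CROSS_CC = "armv5tel-softfloat-linux-gnueabi-gcc"


def build_commands(fortran_source, cross_cc=CROSS_CC):
    """Return the two commands that turn foo.f into foo.o via foo.c."""
    base, ext = os.path.splitext(fortran_source)
    if ext.lower() != ".f":
        raise ValueError("expected a .f source file: %r" % fortran_source)
    c_source = base + ".c"
    obj = base + ".o"
    return [
        ["f2c", fortran_source],                # step 1: Fortran -> C
        [cross_cc, "-c", c_source, "-o", obj],  # step 2: C -> ARM object code
    ]


def cross_compile(fortran_source, cross_cc=CROSS_CC):
    """Run both steps in order, stopping at the first failure."""
    for cmd in build_commands(fortran_source, cross_cc):
        subprocess.check_call(cmd)
```

Calling `cross_compile("solve.f")` would leave `solve.o` behind; the final link step still needs the ARM build of libf2c mentioned above.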

I have something special on my evil agenda for this... and will hopefully be announcing something about that within the not-too-distant future!

Lastly ... for all of the gadget-geeks that are reading this... what sort of devices are you hoping to find in your stockings this holiday season? I'm fairly infatuated with the Qualcomm Snapdragon / MSM devices, but the Nokia N900 (with an OMAP chip) also looks very tempting.

20091008

STMPE2401 Linux Driver

I just thought that I would post a message about one of my recent submissions to the Linux kernel.

The STMPE2401 is a multi-function device from ST Microelectronics intended for mobile, low-power applications. It provides an I²C interface for up to 24 extra GPIO lines, each with independent interrupt generation capabilities. Up to 20 of those lines can be used with the integrated 8x12 matrix keypad controller. Another 3 pins can be used for programmable PWM channels, and 3 other pins can be used for a rotator input. All of the subsystems can operate together, at the same time, and each subsystem also provides additional interrupt capabilities.

This device appears on the Peek handheld, among many others. I wrote this driver for another device which I am still unable to mention (sorry about that).

There's actually a newer chip, called the STMPE2403, I believe, which makes a couple of improvements over the original. Namely, it has an automatic sleep function that ensures the lowest power consumption possible, but it's still capable of running in 2401 compatibility mode.

In the Linux kernel, this should eventually show up under drivers/mfd, with sub-components showing up in drivers/gpio/chip, drivers/input, and so on. Currently, I've only implemented the keypad functionality but have provided lots of structure for the other subsystems. Here is the patch.

Enjoy!

Labels: i2c, kernel, linux, linux-input, patch, st microelectronics

20090930

Motorola Q9h

So I wrote Motorola recently to see if they would like to cooperate in my effort to port Linux and Android to the Q9h, and although a couple of engineers on the motodev site seemed enthusiastic, I still haven't heard any kind of definitive reply.

The next logical step was to disassemble a broken Motorola Q9h I found on eBay :) I haven't entirely finished this tear-down yet, because I'm still trying to pry off a few remaining EMI shields, but it's coming along smoothly.

I have to say that I'm amazed by all of the components from various vendors that went into this device. Of course, there's the TI OMAP2420, a TI power management chip, some Samsung memory, Elpida memory, a Broadcom (I'm guessing Bluetooth) transceiver, several Freescale parts that I haven't yet identified, the SiRF 5000 AGPS receiver, a few random sensors (ambient light, ...), etc.

I'll be specifically looking for a 'companion chip' sort of like the TWL4030, to see what I can get out of the USB pins. I believe that D+ / D- are not fully muxed with UART2, but they are with GPIO 107 / 108, which means I might have to write a small soft-uart driver for the Linux kernel.

Most of the Linux-OMAP code for the 2420 device is already present upstream in the kernel (mainly written by Nokia engineers), so in terms of the Linux porting effort, it's really just a matter of configuration. There may be a couple of non-standard peripheral devices, but I'm not expecting any major difficulties.

I hope you eagerly await some photos of the internals :) I'll have to take a pause in order to get back to the library and resume studying for my wireless communication exam, but I should be done the teardown in another day or two.

Wish me luck!

Update-20091002: Teardown

Update-20091002: OpenEZX Page

20090921

20090919

20090915

Gentoo BootSplash for ARM FrameBuffers

With Gentoo now running on several mobile devices, including the OpenMoko devices, HTC Wizard, and one that I'm still unable to mention due to NDA, I thought that it would be advantageous to be able to package splashutils for ARM devices in order to make the Gentoo boot process as visually appealing as others (notably Angstrom).

One major blocker in that process was being able to (cross-)compile klibc for ARM devices. Although that has been working well for a couple of years already, klibc was unavailable for Gentoo / ARM without an arm keyword. So I had to edit the klibc ebuild considerably and make it cross-compile friendly. In the process I added a couple of useful features and bug-fixes that were already in OpenEmbedded, like wc, losetup, etc. You can see the efforts of my klibc ebuild at Gentoo's Bugzilla under bug #284957. It's currently working without any issues. Incidentally, the ebuild configures for the ARM EABI if it's applicable.

Once that was ready to go, I contacted Spock and he assured me that his splashutils would work for generic framebuffers, and not just vesa or vga style devices. Then, it was just a matter of emerge-ing splashutils, and creating a theme.

I chose to modify the natural_gentoo theme because it was relatively clean-looking and recent. There are definitely hundreds of themes to choose from though. Here are the modified versions for resolutions 240x320 (silent), 240x320 (verbose), 320x240 (silent), and 320x240 (verbose). My 240x320.cfg file is available here and a feature request has been added at Gentoo's Bugzilla under bug #285088.

Note: Ned Ludd and Angelo from #gentoo-embedded had apparently done this a year ago, but I guess they hadn't submitted it to the main portage tree. Unfortunately I was unaware of their efforts, and could maybe have spared myself some time had I known about them beforehand.

20090821

Android ARMv4T Donut Patch

On my most recent flight from Canada to Germany, and in spite of having a great conversation with a single-serving friend, I got a bit bored and decided to try and build Donut for ARMv4T, which is quite unstable at the moment. The single-serving friend, whose name I never learned, was in a very similar boat as me - he said he was in the middle of an MSc in biomedical engineering in Lyon, and was originally from Kentucky. It's nice to actually have a decent conversation on an overseas flight for a change.

Anyway - back to the topic at hand.

The reason I'm working on the Donut branch of Android, and patching it for ARMv4T, is that I would eventually like to use it on my OpenMoko FreeRunner, which currently uses Android-Cupcake from KoolU / Michael Trimarchi. Although the generic Donut branch now builds successfully with my patch, I still need to migrate all of the hardware-specific FreeRunner changes that KoolU (and many others) made.

If you are interested in starting from the upstream end and working downstream, as opposed to starting from KoolU git and working upstream, then check out my patch (update: see bottom of post for updated patches) and give me some feedback. I'm not inclined to submit it upstream yet without having tested it on an actual device, so it's in very early stages right now, but it definitely builds.

You'll need to create a buildspec.mk file, like the one below, and put it in the base of your Android build directory:

TARGET_SIMULATOR := false

TARGET_BUILD_TYPE := release

TARGET_ARCH_VERSION := armv4t

Happy hacking!

update-20090824:

update-20090825:

- While working on the above patch, I discovered that a lot of upstream work has been done on Android under the hood to support ARMv4T. That includes v4T assembly optimization for opencore, dalvik, etc. Bottom line - this is good for the FreeRunner! However, there are still a few places that need improvement and equivalent v4 asm. I filled in some of the gaps (external/opencore/codecs_v2/video/m4v_h263/enc/src/fastquant_inline.h) last night, but the rest are mainly files that think CLZ is an ARMv4 instruction (sheesh!). They were easy enough to convert to C-equivalent code.

- Recently Michael Trimarchi also announced some work was underway to integrate the libGlamo code into Android's GL. Not too shabby! Perhaps we might see an accelerated Android on the OpenMoko FreeRunner in the near future!

- I recently put some thought into the Glamo's limited 511x511 buffer and limit of 7Mb/s to memory. Sure, it sucks that we can't do everything at 640x480 (as advertised), but aren't there other ways to get around it? Such as performing acceleration on one quadrant of the FB at a time? Or switching to/from a lower or higher resolution when playing video / games? We essentially have to beg for, borrow, cheat & steal those accelerated pixels if acceleration is to work.
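For the CLZ replacement mentioned in the first bullet above, the usual portable trick is a binary search over the word. Here is a minimal sketch of that idea, written in Python for brevity; it is not the code from my patch, and the name `clz32` is made up for the example. The same five-step structure maps directly onto C shifts and compares.

```python
def clz32(x):
    """Count leading zero bits of a 32-bit unsigned value without a CLZ instruction."""
    x &= 0xFFFFFFFF  # treat the input as a 32-bit word
    if x == 0:
        return 32
    n = 0
    # Binary search: whenever the top `shift` bits are all zero,
    # count them and shift them out of the way.
    for shift in (16, 8, 4, 2, 1):
        if x >> (32 - shift) == 0:
            n += shift
            x = (x << shift) & 0xFFFFFFFF
    return n
```

The loop always runs five steps regardless of the input, which is the main appeal on a core without a hardware CLZ.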

update-20091214: Please see my newer post for a continuation of the effort.

Labels: android, armv4t, FreeRunner

Location: Kiel, Germany

20090711

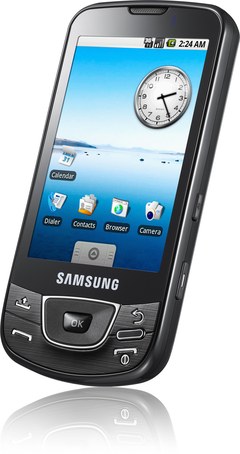

HTC Hero vs Samsung Galaxy

I've been marvelling at these two devices for the last couple of months or so, and decided that it's finally time to make a decision.

Both the Samsung Galaxy and the HTC Hero use the same SoC (who isn't?) - the Qualcomm MSM7200A, a 528 MHz ARM1136EJ-S core, using the ARMv6 ISA. The MSM7200 includes a 3D acceleration unit and a Qualcomm DSP, making it very suitable for any multimedia application. I do believe that the 3D acceleration unit still lacks full support under Linux/Android (much like the OMAP3530/PowerVR). Both handsets have a 5MP camera, although no secondary camera for video calling. They both have 802.11b/g, bluetooth, etc. The display on both devices is 320x480 pixels. Major differences are that the Galaxy has several more buttons (e.g. volume control), much more internal storage space, and a very power efficient AMOLED display, while the Hero sports the new Sense(R) UI, integrated Adobe Flash, more RAM, and multi-touch support.

I thought I would summarize the main differences. For those who would like to see a detailed comparison of the two devices, check out this link from pdadb.net.

| | HTC Hero | Samsung Galaxy |
|---|---|---|
| RAM (MB) | 288 | 128 |
| Flash (MB) | 512 | 7630 |
| Connector | ext-USB (mini-USB compatible) | micro-USB |
| Battery (mAh) | 1350 | 1500 |
| Display Depth | 16-bit (65-thousand colours) | 24-bit (16-million colours) |
| Display Type | Transflective TFT (standard) | AMOLED (very low power) |
| Multi-Touch? | YES! | NO! |
| Price (€) | 485,97 (Amazon.de) | 476,98 (Amazon.de) |

So far, I have not had a chance to examine either of these devices in any detail, but from what I have read, the Hero does support multi-touch. Until now, most sources have stated that the Galaxy does not support multi-touch. However, that might only be due to the fact that Android did not support multi-touch in software until the Hero came out. Perhaps the Galaxy has hardware support for multi-touch. Who knows with any certainty? I've read that the Galaxy actually has a capacitive touchscreen, so multi-touch does not seem that far off. The Galaxy also lacks Google-branding, which (aside from lacking some cover-art) means that software updates are not transferred over the air. Rather, updates must be performed manually with a USB cable, which some users might actually prefer.

Let's start with the Hero. I like that it has a large amount of RAM and a great UI that supports multi-touch. The RAM will come in handy for streaming media (i.e. video) and fast context switching (Android's OOM process killer will not be called as frequently). As for multi-touch, I think you would probably agree that there really is no alternative for pinch-zooming in the browser, or easy photo rotations. Now, the Galaxy - the fact that it's missing some RAM has been somewhat compensated for by the built-in 8GB of storage. As far as the screen goes, AMOLED spells very low power (I love it) and still supports a true-colour (16-million-colour) display. That will make it excellent for viewing any type of video or looking at photos. However, if the Samsung hardware truly does not support multi-touch, then I would be quite disappointed.

I have to admit, that I'm still sitting on the fence about this until I know with any certainty that the Samsung does not support multi-touch. Originally, I was thinking that the Samsung would be the best choice, without a doubt, but now I'm really considering how the extra RAM in the Hero might be beneficial. In terms of permanent storage, the Hero has more than enough, and is expandable with a micro-SD card anyway. On the other hand, the Galaxy could easily host a few operating systems on-board, or perhaps my entire collection of (decent) mp3s. This decision would definitely be easier if I knew for a fact that the Galaxy hardware did or did not support multi-touch.

Labels: android, arm, htc hero, HTC Wizard, linux, samsung, samsung galaxy

20090708

Suggestions for Future OpenMoko Device

I love my FreeRunner as much as the next guy. The truth is, though, that there are some serious improvements that could be made to the OpenMoko hardware - some purely for usability, and some to fix a couple of plaguing, nasty bugs. The suggestions below would really turn something like the FreeRunner into a truly portable computing device - not just another iPhone clone.

I've collected a few of my own suggestions below:

- use capacitive instead of resistive touch-screen (for multi-touch gestures)

- switch to a newer generation SoC, such as the Qualcomm MSM7200A

- newer ARM ISA (e.g. enhanced, jazelle, dsp, etc)

- integrated video codec (ditch the Glamo)

- integrated 3D graphics (again, ditch the Glamo)

- integrated GPS receiver - just needs internal antenna

- get rid of external GPS antenna connector (use internal instead)

- add dedicated power connector (e.g. Nokia 6620)

- built-in circuitry for 'software-free' battery charging (see discharged battery bug)

- support charging from 1st USB if dedicated power supply is absent (1st port should be USB OTG)

- add 2nd USB for use as dedicated host port

- add a mini-dvi video output

- add an ambient light sensor (auto backlight control in software)

- a 5MP photo / video camera and 1.3MP camera for video chat.

Comments?

20090627

Hello, Native Android World!

I just thought I would post my experiences with creating an Android application that uses JNI to call native code.

First of all, create a regular Android project in Eclipse, following the Hello Android tutorial, except:

- change the project name to HelloNativeAndroid

- change the class name to be HelloNativeAndroid

- change the package name to be com.christopherfriedt.android, or something similar

HelloNativeAndroid.java:

package com.christopherfriedt.android;

import java.io.*;
import java.util.zip.*;

import android.app.Activity;
import android.os.Bundle;
import android.util.Log;
import android.widget.TextView;

public class HelloNativeAndroid extends Activity {

    // Implemented in the native shared library.
    private native String greet();

    /** Called when the activity is first created. */
    @Override
    public void onCreate(Bundle savedInstanceState) {
        super.onCreate(savedInstanceState);
        TextView tv = new TextView(this);
        tv.setText( greet() );
        setContentView(tv);
    }

    // Runs once when the class is loaded: extract the library from the
    // .apk to the SD card if it is not already there, then load it.
    static {
        String pkg = "com.christopherfriedt.android";
        String cls = "HelloNativeAndroid";
        String lib = "libHelloNativeAndroid.so";
        String apkLocation = "/data/app/" + pkg + ".apk";
        String libLocation = "/sdcard/" + lib;

        File f = new File(libLocation);
        if ( ! f.canRead() ) {
            Log.d( cls, "'" + libLocation + "' not found, proceeding to installation" );
            try {
                ZipFile zip = new ZipFile(apkLocation);
                Log.d( cls, "found ZipFile " + apkLocation );
                ZipEntry zipen = zip.getEntry("assets/" + lib );
                if ( zipen == null ) {
                    // Bail out here rather than passing null to getInputStream().
                    throw new FileNotFoundException( "unable to find ZipEntry 'assets/" + lib + "'" );
                }
                Log.d( cls, "found ZipEntry '" + "assets/" + lib + "'" );
                InputStream is = zip.getInputStream(zipen);
                Log.d( cls, "opened InputStream" );
                OutputStream os = new FileOutputStream(libLocation);
                Log.d( cls, "opened OutputStream " + libLocation );
                byte[] buf = new byte[4096];
                int n;
                while ( (n = is.read(buf)) > 0 ) {
                    Log.d( cls, "read " + n + " bytes from InputStream" );
                    os.write(buf,0,n);
                    Log.d( cls, "wrote " + n + " bytes to OutputStream" );
                }
                os.close();
                Log.d( cls, "closed OutputStream" );
                is.close();
                Log.d( cls, "closed InputStream" );
            } catch( Exception e ) {
                Log.e( cls, "failed to install native library: " + e );
            }
        }

        try {
            Log.d( cls, "attempting to load " + lib );
            System.load( libLocation );
            //System.loadLibrary( cls );
            Log.d( cls, "loaded " + lib );
        } catch( UnsatisfiedLinkError e ) {
            // System.load() fails with an Error, which catch(Exception) would miss.
            Log.e( cls, "failed to load native library: " + e );
        }
    }
}

Open up a shell in the 'src' directory of your project and run the following (javac will need the Android SDK's android.jar on its classpath to resolve the android.* imports):

javac com/christopherfriedt/android/HelloNativeAndroid.java

javah com.christopherfriedt.android.HelloNativeAndroid

Make a subdirectory in your Android project, called for example 'C', and then copy the resulting com_christopherfriedt_android_HelloNativeAndroid.h (created with javah), to the 'C' folder.

Next, create the following files:

HelloNativeAndroid.c:

#include "com_christopherfriedt_android_HelloNativeAndroid.h"

#define GREETING "Hello, Native Android!"

/* Native implementation of HelloNativeAndroid.greet(): returns a new Java string. */
JNIEXPORT jstring JNICALL Java_com_christopherfriedt_android_HelloNativeAndroid_greet
  (JNIEnv *e, jobject o) {
    return (*e)->NewStringUTF(e, GREETING);
}
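The long function name in the C file is not arbitrary: JNI builds it from the package, class, and method names, which is why changing any of them means re-running javah. As a rough sketch of the basic mangling rules (JniNames and its methods are hypothetical helpers of my own, not part of the JDK, and the extra signature suffix used for overloaded native methods is ignored):

```java
final class JniNames {
    // Escape one name according to the basic JNI mangling rules.
    static String mangle(String name) {
        StringBuilder sb = new StringBuilder();
        for (char c : name.toCharArray()) {
            if (c == '.' || c == '/') {
                sb.append('_');            // package separator
            } else if (c == '_') {
                sb.append("_1");           // literal underscore
            } else if (c == ';') {
                sb.append("_2");
            } else if (c == '[') {
                sb.append("_3");
            } else if (c < 128 && Character.isLetterOrDigit(c)) {
                sb.append(c);              // plain ASCII letter or digit
            } else {
                sb.append(String.format("_0%04x", (int) c)); // other characters
            }
        }
        return sb.toString();
    }

    // Symbol the native library has to export for a given native method.
    static String symbol(String className, String methodName) {
        return "Java_" + mangle(className) + "_" + mangle(methodName);
    }

    public static void main(String[] args) {
        // prints Java_com_christopherfriedt_android_HelloNativeAndroid_greet
        System.out.println(symbol("com.christopherfriedt.android.HelloNativeAndroid", "greet"));
    }
}
```

If the exported symbol and the expected name disagree, the call to greet() fails at run-time with an UnsatisfiedLinkError, so this is the first thing I would check.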

Makefile:

CROSS    = arm-softfloat-linux-gnueabi-
CC       = $(CROSS)gcc
STRIP    = $(CROSS)strip

JDK_HOME = /opt/sun-jdk-1.6.0.14

PKG      = com_christopherfriedt_android
CLASS    = HelloNativeAndroid

DEBUG    =

CFLAGS   = -O2 -pipe -Wall -march=armv4t -mtune=arm920t \
           -I$(JDK_HOME)/include -I$(JDK_HOME)/include/linux
LDFLAGS  = -fPIC -shared -nostdlib -Wl,-rpath,/system/lib

ifneq ($(DEBUG),)
CFLAGS  += -g
endif

SOBJ     = lib$(CLASS).so

all: $(SOBJ)

$(SOBJ): $(PKG)_$(CLASS).h $(CLASS).c
	$(CC) $(CFLAGS) $(LDFLAGS) -o $(SOBJ) $(CLASS).c
ifeq ($(DEBUG),)
	$(STRIP) --strip-unneeded $(SOBJ)
endif

clean:
	rm -f $(SOBJ)

install: all
	cp $(SOBJ) ../assets

Then run 'make', and 'make install'. Build your Android project, and it will now have libHelloNativeAndroid.so bundled in the .apk file, inside the 'assets' directory (you can easily view the contents of it with unzip -l, because it's just a zip file).
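Since the .apk is just a zip file, pulling the library back out of it needs nothing beyond java.util.zip. Here is a minimal sketch of that extraction step in plain Java with no Android APIs; AssetExtractor is a hypothetical helper name, and the try-with-resources syntax assumes a newer Java than the one I built against (use try/finally otherwise):

```java
import java.io.File;
import java.io.FileNotFoundException;
import java.io.FileOutputStream;
import java.io.IOException;
import java.io.InputStream;
import java.io.OutputStream;
import java.util.zip.ZipEntry;
import java.util.zip.ZipFile;

final class AssetExtractor {
    // Copy a single entry out of a zip archive (such as an .apk) into dest.
    // Returns the number of bytes written.
    static long extract(File archive, String entryName, File dest) throws IOException {
        try (ZipFile zip = new ZipFile(archive)) {
            ZipEntry entry = zip.getEntry(entryName);
            if (entry == null) {
                throw new FileNotFoundException(entryName + " not found in " + archive);
            }
            long total = 0;
            try (InputStream in = zip.getInputStream(entry);
                 OutputStream out = new FileOutputStream(dest)) {
                byte[] buf = new byte[4096];
                int n;
                while ((n = in.read(buf)) != -1) {
                    out.write(buf, 0, n);
                    total += n;
                }
            }
            return total;
        }
    }

    public static void main(String[] args) throws IOException {
        // Usage: java AssetExtractor <archive> <entry> <destination>
        long n = extract(new File(args[0]), args[1], new File(args[2]));
        System.out.println("extracted " + n + " bytes");
    }
}
```

The streams are closed even when the copy fails half-way, which matters on a device where a half-written file on the SD card would otherwise be picked up on the next launch.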

Then install the .apk file using the android debug bridge (adb), with 'adb install HelloNativeAndroid.apk'.

Open the application up on your mobile, or in the emulator, and you should see something resembling the screenshot below.

Some notes:

- My target was a Neo FreeRunner from OpenMoko, hence the -march=armv4t -mtune=arm920t . Feel free to change that to something like -march=armv5te as appropriate

- In the Makefile, adjust the path to your JDK as appropriate

- The rpath argument is necessary so that the native Android linker will know to link the shared object to /system/lib/libc.so, which is from the Bionic C library

- The -nostdlib option is required so that the shared library does not require libc.so.6, which would actually refer to glibc

- The shared library will be extracted to /sdcard/libHelloNativeAndroid.so, so you'll need that folder to exist and be writable on your device
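One thing to watch for if the library never makes it onto the SD card: System.load() signals failure with an UnsatisfiedLinkError, which is an Error rather than an Exception, so it has to be caught explicitly. A small sketch in plain Java (NativeLoader is a hypothetical helper name of my own):

```java
final class NativeLoader {
    // Try to load a shared library from an absolute path.
    // Returns false instead of letting the Error propagate.
    static boolean tryLoad(String absolutePath) {
        try {
            System.load(absolutePath);
            return true;
        } catch (UnsatisfiedLinkError e) {
            System.err.println("failed to load " + absolutePath + ": " + e.getMessage());
            return false;
        }
    }

    public static void main(String[] args) {
        System.out.println(tryLoad("/sdcard/libHelloNativeAndroid.so"));
    }
}
```

On the device you would log through android.util.Log instead of System.err, and probably fall back to showing an error message rather than calling the native method.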

Acknowledgements:

- http://android.wooyd.org/

- http://honeypod.blogspot.com/2007/12/shared-library-hello-world-for-android.html

20090626

Mac OS X on a Dual-Core ARM-Powered Netbook?

With all of the hype over ARM-powered netbooks recently, they seem to be here to stay. Basically all of the major companies are jumping on this opportunity; we have the ARM licensees such as TI, Marvell, Qualcomm, and Freescale, as well as major operating system providers such as Google (i.e. Android), and Microsoft. Even third-party software vendors like Adobe (i.e. Flash) are jumping aboard.

The ideal silicon solution is a system-on-a-chip (SoC), maybe accompanied by an external graphics co-processor (if it's not already integrated). For ARM architectures, that basically means 1 or 2 chips to power the entire computer versus Intel's 3, 4, 5, etc. Peripheral devices aside, ARM architectures use an order of magnitude less power than equivalent Intel architectures. What that means is that the computers we use for our daily tasks, including document / internet activities, multimedia, programming, numerical analysis, etc, will have no fan, no heat-sink, and enough battery power for 10 times the active computing or standby time of Intel-based devices.

Now, I came across an article that I think actually has some merit. Apple could potentially become the next big-time ARM licensee and chip fabricator! Considering their recent acquisition of P.A. Semi and their huge successes with the iPod and iPhone (both ARM devices), they would technically stand to save millions by designing and fabricating their ARM chips in-house rather than purchasing them from outside vendors. Now in terms of porting OS X to an ARM device - piece of cake. The core of the Apple operating system was designed from the start with portability and inheritance in mind. Their software is already pre-built and packaged in a universal binary format. In all likelihood, all they would need to do is tick a check-box in Xcode to build OS X for ARM.

Honestly, I've never owned any Apple products other than a second-hand 4th generation iPod that I lost on my last flight, mainly due to the prices. But if Apple decides to make a competitive move in the netbook market, then such a device might be the first Apple computer that I would buy, if the price is right.

To elaborate - I'm a really big fan of the TouchBook from Always Innovating, Inc, especially with the detachable keyboard, and touchscreen / tablet form factor. If Apple could do the same, with a unibody aluminum case, have nice illuminated keys, and throw in that always-on 3G HSDPA modem that Qualcomm has in their Snapdragon, then I would be 100% in. In that case, I would very likely be willing to cough up another hundred for what I see as the ideal netbook. Of course, this could draw the power ratio to something like 1/7 instead of 1/10 when compared to an Intel netbook, but that still makes a very, very big difference.

On a final note, 2010 will be the year that consumers first see ARM chips incorporating two applications processors (aside from radio, DSP, graphics, etc), much like the 'Core Duo' from Intel. Apple could incorporate two ARM cores, in order to retain the UI responsiveness that Apple has been so well known for in the past.

Below is a block diagram [via Engadget] of the new ARM Cortex A9 chips that will begin to appear in 2010. The Cortex line of ARM processors was a step in a slightly different direction, with the Cortex A9 being among the first ARM cores to support out-of-order execution (OoOE). Although ARM chips have supported single-instruction-multiple-data (SIMD) since the v6 instruction set was introduced, OoOE will boost ARM performance to a level closer to Intel processors, which have traditionally used both OoOE and SIMD (as MMX). OoOE represents instruction-level parallelism while SIMD represents data-level parallelism. It should be noted that both of the aforementioned optimizations are independent of each other as well as independent of instruction pipelining. However, having pipelining, OoOE, and SIMD on the same chip leads to a sharp increase in complexity, and a large amount of silicon becomes necessary to keep data hazards in check. This is the primary reason that Intel chips have had such a long history of being power-hungry. Hopefully, this won't have too large an effect on the power efficiency of future ARM chips.

20090624

Samsung i7500 Galaxy from O2 Germany

I thought I would post a link I found to a PDF on O2's website that states the price of the Samsung i7500 with Android. The flyer says that it will cost 367€ without a contract.

Check it out here. Some updates are on the O2Online.de blog, saying that it should be available either this week or next.

I hope I won't be out of luck if I ask for it with an English UI :)

Update: Nooooooo.... I was just checking out a YouTube video preview of the i7500 and discovered a horrible design decision on the part of Samsung. In spite of having designed the device with a 3.5mm audio jack (thank you Samsung!) they used one of those super annoying, hard-to-find, flat USB jacks.

The flat USB jacks are horrible and tend to break quite easily. It's also not saving any space as there is a huge 3.5mm jack directly beside it - the whole point of the flat USB connectors is that they're supposed to be used when thickness is an issue.

Read this, and just know that I'm shaking my head 'no' right now in disappointment.

Incidentally, has anyone found out if there is a regular Mini USB connector with the same pinout as the flat type? I wouldn't mind just desoldering the old one and putting in a standard Mini USB jack.

20090619

NVidia Prefers WinCE to Android

There have been hordes of ARM-powered netbooks coming out of the woodwork at Computex this year. One of them was touting the new NVidia Tegra ARM chip.

Here's a link to an article on Slashdot which reports that NVidia is not ready to back Android as a capable platform for Netbooks.

Unfortunately, I have to say that I agree - although it's not completely Google, or the Open Handset Alliance, or Linux that is truly at fault - or NVidia for that matter.

The problem is that NVidia would need to expose yet another kernel and user-space ABI (for their latest, integrated Tegra GPU no less), and they are not prepared to do so. Aside from that, Android performs much of the hardware acceleration (a.k.a. DSP algorithms) for graphics and audio in a completely re-done set of non-portable libraries (the last time I saw the code), rather than using a single, portable abstraction layer such as OpenGL.

My recommendation? End-users should stick with WinCE (as well as the NVidia-modified UI) that will ship with the NVidia-based netbooks - IF THEY CHOOSE TO BUY A NETBOOK THAT USES AN NVIDIA TEGRA CHIP. Certainly, there are many other, more mature ARM chip vendors that will be offering netbook platforms (e.g. Qualcomm, TI, etc).

However, for almost all other ARM cores with unencumbered ABIs and APIs for hardware acceleration - by all means use Android! The Android community, which is composed of literally thousands of developers, will be able to ramp up on new technology and integrate software far faster than NVidia & MS can as single entities. Plus, as we have seen in the past, the release cycle for Android will likely be more frequent, since it's Open Source. Furthermore, there is less likelihood that Android will fall behind and become unmaintained (which was the whole purpose of the OHA in the first place), while the NVidia & WinCE combination will likely become unsupported and outdated at some point.

Labels:

android,

arm,

embedded,

google,

linux,

netbook,

nvidia,

open handset alliance,

open source,

wince

20090611

Canada Rejects Business Method Patents

Here's a link to an interesting article by Michael Geist [via Slashdot].

I completely agree with the ruling, and am very glad that we still don't patent software in Canada.

20090608

More From Computex. The Green Revolution of Computing Begins

One word: Wow!

For basically as long as I can remember, I've been interested in low-power computing. The combination of environmental awareness, plus the ability to understand how things work (i.e. engineering), essentially equates to my urge to make the universe more energy efficient. This is the reason why I've been such a big fan of ARM cores for so many years, and also why I've been following the progress of ARM and Linux together. Linux is basically the only publicly-available, fully-featured OS for alternative architectures such as ARM (ok, BSD too), which is why I consider Linux pivotal for the recent achievements of ARM and their licensees. Having seen the Qualcomm-powered netbook only a few days ago, I am no longer hesitant to say that Linux has enabled ARM to potentially overcome Intel in the PC market.

Slashdot posted this yesterday, and I see no shame in admitting I read it there first. Computex has yet again unveiled what I consider to be the future of mobile computing and traditional workstations. Take a few minutes and please watch these videos. What you'll see is that several well-known semiconductor companies (Freescale, Nvidia, Qualcomm, ... Samsung?) plus many new and established manufacturers, have decided that it is time to bring ARM devices to the desktop.

As many engineers are already aware, ARM chips generally use < 1W of power, and entire systems often consume < 5W. These ARM chips run in the GHz range and offer more than enough computational power for day-to-day tasks, multimedia applications, and now even 3D gaming. When we compare the power consumption of about 5 W for a power-hungry ARM system, with the power consumption of a 300W office computer, the greener choice is clear.

A typical Intel-powered laptop or workstation (see x86 architecture) can often consume power in the range of 250-400W, when hard disks, optical drives, and expansion cards are taken into consideration. The most power-efficient x86 systems can run at about 30W. Therefore, measured against the roughly 5W ARM system above, the power savings are somewhere between one and two orders of magnitude: about 80 times in the most optimistic case (400W) and about 6 times in the most conservative case (30W) - but always in favour of ARM systems.
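Taking the figures quoted above at face value (a roughly 5W ARM system, a 250-400W typical x86 machine, and a 30W power-efficient x86 machine), the ratios can be checked with a few lines of arithmetic. This is only a back-of-the-envelope sketch, and PowerRatio is a hypothetical name of my own; the results land in the same one-to-two orders-of-magnitude ballpark.

```java
final class PowerRatio {
    // How many times more power the x86 system draws than the ARM system.
    static double ratio(double x86Watts, double armWatts) {
        return x86Watts / armWatts;
    }

    public static void main(String[] args) {
        double arm = 5.0; // power-hungry ARM system, in watts
        System.out.println(ratio(400.0, arm)); // 80.0 (most optimistic case)
        System.out.println(ratio(250.0, arm)); // 50.0
        System.out.println(ratio(30.0, arm));  // 6.0  (most conservative case)
    }
}
```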

Although the economy is on everyone's minds these days, energy is probably going to be one of the hottest markets in the next decade (and arguably now). I predict that we are likely to see the Green Revolution of Computing within the next few years. Power-efficient chips, such as those based on ARM, MIPS, and PowerPC architectures, will likely start replacing Intel chips on a massive scale. It's likely that this will first happen in the home, and then it will make its way into businesses. Businesses typically rely on 'legacy' applications and the cost is just too high to redesign most programs right away, so we will likely see the business adoption of Green Computing later on, hopefully driven by some kind of government incentive program.

ARM chips are typically designed for a single-user load, and aren't necessarily the best thing for professional video editing, high-demand servers, extreme gaming, or scientific computing - at least not yet anyway. Of course, adding optical or magnetic-disk drives to any ARM system would increase the average power consumption of the device, but not as much as it would in the case of a PC, as most of the required components for ARM are integrated onto a single chip, and not contained in a different chipset altogether. But isn't physical media becoming almost irrelevant these days, with the increased tendency toward cloud computing?

20090602

Qualcomm SnapDragon Powered EEE PC Surfaces at Computex

Computex 2009 was host today to the newest member of the EEE PC family, powered by the Qualcomm Snapdragon system-on-a-chip and Google's Android OS for mobile devices. The Qualcomm Snapdragon SoC incorporates Qualcomm's own ARMv7-compatible Scorpion core operating at 1 GHz (future models will be running at 1.3 GHz), an integrated 600 MHz DSP (a coprocessor for math, audio, and 3D acceleration), as well as embedded hardware video codecs, enabling this processor to support HD video at 720p (later models with 1080p). This netbook also offers always-on connectivity with an integrated internationally-compatible 3G modem, as well as the standard 802.11g wireless connectivity. The full spec list is available here. However, the kicker in favour of ARM-based netbooks is their power efficiency. The Qualcomm-powered EEE PC is expected to boast a battery life of 8 to 10 hours on a single charge!

ARM-based processors are what power 99% of today's cellular phones and mobile electronics, such as the iPhone. As one might expect, there is no room for a heat sink or fan in a mobile phone. Therefore, ARM engineers and their licensees have taken power efficiency to a whole new level with this technology. Aside from the impressive multimedia and gaming capabilities, and like most ARM-based SoCs, all of the transistor logic for the CPU, co-processor, and peripheral devices is integrated onto a single piece of silicon, hence the term 'system-on-a-chip'. It's as if the video, sound, network, PCIe controller, memory controllers, etc, etc, etc, were crammed into a single package. For comparison, a similar netbook platform, based on the Intel architecture, would occupy at least three, but more commonly four or more chips, resulting in a drastically larger amount of power dissipated as heat.

The 1GHz barrier for ARM chips was first crossed commercially by Marvell, with their SheevaPlug. Texas Instruments had reached milestones even earlier with their OMAP line of chips, which power devices such as the BeagleBoard development board and the OpenPandora mobile gaming platform. The Qualcomm-powered EEE PC is not the first ARM-powered netbook though; some people may still have their eyes on the Always Innovating TouchBook, which is also powered by the familiar OMAP processor. However, if ASUS blesses this marriage of ARM and Android in their cathedrals of manufacturing, then the new Qualcomm-powered ASUS EEE PC might be the first ARM-powered netbook that hits the mass market.

20090529

Why Windows Suffers From Bloat

Yesterday, I was trying to install a popular Pocket PC weather application on my 'smart' mobile phone, which runs Windows CE 5.0. Linux is almost running on it without problems, so hopefully I won't be using WinCE much longer, but rather Android. In any event, the application required something in the range of 5 to 10 megabytes of program storage space, which was not available on my device because most of the space was already filled with other proprietary software. Never mind the fact that my device has 128 MB for program storage and 64 MB of RAM, which is more than enough in my humble opinion, but 5 to 10 megabytes for a simple program to read the weather? I would say that is just slightly excessive.

Let me explain a little bit about resource utilization in computer systems. I feel that I can make the generalization to 'computers' here because a mobile device is basically a small computer anyway, with relatively less storage and working memory. Being a long-time member of the Linux ecosystem, I feel that I have been accustomed to (spoiled by?) the always-comfortable feeling that my permanent storage as well as working memory are 'very large' when compared with the amount I actually need to run any application. I never have to worry about running out of hard-disk space when I install a new program, nor do I have to worry about running out of RAM or experiencing large-scale system slow-down if I have many programs running simultaneously. Both aforementioned problems plague most Windows users I know.

Why does that happen? The answer is technically a bit involved, but it can be explained with a very simple analogy; Open Source Software (OSS) shares code and closed source software (CSS) doesn't.

OSS developers are free to use, modify, and redistribute source (and binary) code. One of the benefits of this philosophy is that several applications (however unrelated they seem) can share the same code for common tasks. For example, a media player would need code (some algorithm) to sort and list all of your favourite tracks in alphabetical order. Similarly, a spreadsheet application would need similar code to sort a list of names in alphabetical order. In the OSS world, both of these applications have the potential to use the same code to sort a list alphabetically (as a general example). The developer of the media player, the developer of the spreadsheet, as well as the developer of the alphabetical sort code are all able to help each other and improve the alphabetical sort algorithm. They exist in and contribute to a common ecosystem where everyone benefits.
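To make the media player / spreadsheet example concrete, here is a toy sketch in which two otherwise unrelated 'applications' call one shared sorting routine instead of each carrying a private copy. All of the class names (AlphaSort, MediaPlayer, Spreadsheet) are hypothetical and only stand in for real programs and a real shared library.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Collection;
import java.util.Collections;
import java.util.List;

// The shared code: one alphabetical sort that everyone can use and improve.
final class AlphaSort {
    static List<String> sorted(Collection<String> items) {
        List<String> copy = new ArrayList<String>(items);
        Collections.sort(copy, String.CASE_INSENSITIVE_ORDER);
        return copy;
    }
}

// First 'application': lists tracks alphabetically.
final class MediaPlayer {
    static List<String> playlist(List<String> tracks) {
        return AlphaSort.sorted(tracks);
    }
}

// Second 'application': sorts a column of names alphabetically.
final class Spreadsheet {
    static List<String> sortColumn(List<String> names) {
        return AlphaSort.sorted(names);
    }

    public static void main(String[] args) {
        System.out.println(MediaPlayer.playlist(Arrays.asList("b-side", "Anthem", "coda")));
        System.out.println(sortColumn(Arrays.asList("Zoe", "adam", "Mia")));
    }
}
```

A fix or speed-up made once in AlphaSort benefits both callers, which is the whole point of the shared ecosystem.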

On the other hand, in the Windows world, similar programs developed by different companies are in a state of economic competition. For example, two different tax programs compete for customers, and (usually) the 'better' product wins. However, the problem even exists between companies that develop completely different applications for the simple reason that many programs use require the same generic algorithms for sorting lists, etc. Therefore, the closed-source software (CSS) ecosystem breeds an inherent distrust between its members, for fear that a competitor might 'steal' the algorithm and thus the potential revenue which that algorithm could generate.

Ok, fine, but how does this relate to computer memory and storage space?

In the Windows world, every program (each written by a different company) would naturally have a secret place where it stores its code for alphabetical sorting. When the media player program is installed on your Windows computer, there is a special file, or library, that stores the sorting algorithm. For every program that uses similar code, the storage space is duplicated, and we're only considering an algorithm to sort names alphabetically! When one considers the many thousands of algorithms that are stored, the storage utilization starts to look very inefficient. Even worse, it's not just the storage (hard disk) space that's affected, but also the working memory (RAM) of the computer!

In the Open Source Software world, this code resides in one place for the whole world to use and modify. Similarly, the code only needs to be installed in one place on a Linux computer, in a single file, for any program that requires an alphabetical sorting algorithm. Essentially the same thing happens while the programs are running in memory; regardless of the number of programs that reference the code, it only exists once in RAM (each program's context is saved elsewhere). For the same number of programs, the same algorithm (code) requires a fraction of the working memory on a Linux computer that it does on a Windows computer. Sharing is good!
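To put rough numbers on the argument above, here is a minimal sketch in Python. It is only a toy model: the function names and the example sizes are my own assumptions for illustration, and it ignores the per-program context mentioned above as well as any real loader or packaging overhead.

```python
def duplicated_footprint(num_programs, routine_kb):
    """Total size when every program carries its own private copy of the routine."""
    return num_programs * routine_kb


def shared_footprint(num_programs, routine_kb):
    """Total size when one shared copy serves every program that needs it."""
    return routine_kb if num_programs > 0 else 0


def savings_kb(num_programs, routine_kb):
    """Space saved by sharing one copy instead of duplicating it."""
    return (duplicated_footprint(num_programs, routine_kb)
            - shared_footprint(num_programs, routine_kb))


if __name__ == "__main__":
    # Hypothetical figures: 10 programs, each needing a 40 KB sorting routine.
    programs, size_kb = 10, 40
    print("duplicated:", duplicated_footprint(programs, size_kb), "KB")
    print("shared:    ", shared_footprint(programs, size_kb), "KB")
    print("saved:     ", savings_kb(programs, size_kb), "KB")
```

In this model the duplicated footprint grows with the number of programs, while the shared footprint stays constant, so the saving grows with every additional program that reuses the routine.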

The benefit of using dynamically linked libraries (shared objects) vs. statically linked libraries is old news for most of the world, including Microsoft. Developers benefit from code reuse, common bug / fix propagation, and of course reduced memory usage, among many other things. Ironically, Windows has supported DLLs for a very long time. However, in spite of the many benefits, most third-party commercial application developers will likely continue to use their own stacks instead of a communal one, so that their 'intellectual property' is not sacrificed. Microsoft has partially rectified this problem with the introduction of .NET, C#, and managed code, but there are still plenty of legacy applications out there using VC++, MFC, and the Win32 API that will never be migrated.

I would assume that resource utilization efficiency increases at least linearly (in some useful range) with the amount of code sharing. Dynamic sharing is much more prevalent in an Open Source Software environment. Therefore, Open Source Software environments exhibit dramatically more efficient resource utilization.

20090414

Linux Foundation Video Contest ... Winner?

Well, the Linux Foundation has released their winning video today...

Do I not sound impressed? Yeaaaa.....

I'm actually not that impressed. I have to admit that I found the winning video to be very dull. It overly simplifies Linux... actually... does it even mention Linux?? It doesn't highlight how Linux brings people together from all walks of life. It doesn't say how Linux runs on everything from toasters to supercomputers or that it runs on more devices than any other OS in the world.

Please also forgive me for saying this, but ... in the winning video, the narrator's voice silently screams "people who use Linux are geeks, and they can barely speak, much less maintain a complex body of software through social interaction!!" Man, the guy's voice reminds me of the one giving the Grails webinar... ugghh... and I don't have very fond memories of Grails.

I thought that some of the finalists were a little too light-hearted. Some of them were slightly spooky, but I would have thought that a quirky, funny, yet clever video would have snuck through to the end. Alas, I was wrong.

My 5 favourites were this, this, this, this, and this, in no specific order, if only because they captivated or identified with the audience somehow. I would have even preferred the Novell Meet Linux ads over the winner of the contest (Flame suit on!). I would have even preferred the TrueNuff spoof advertisements over the winner, in spite of them not being pro-Linux whatsoever.

They would have been better off showing a clip of somebody playing NumptyPhysics.

20090411

Re-branding Engineering

Recently there was a comment / article in the EE Times about re-branding the engineering profession as one that is rewarding on many different levels. I wanted to share my reaction to the article, for anyone who might grace these pages with their eyes.

I would definitely agree with Cohn's perspective. He is the IBM fellow who spoke out about his love for the profession, and how he would do it for free. I myself feel the same. John Cohn spends a lot of his time trying to re-brand the engineering profession, and make it exciting for youths. On the other hand, there is a lot of truth to the follow-up saying that the job market for people like us is getting smaller and smaller in North America and Western Europe.

People who don't understand engineering practice see it as just another expense to get what they want in the end. Yes, that sentiment is directed at the majority of management. So why would any business entity, in their right mind, spend more money to have engineering done in the western world, when they can get the same product from the eastern world at a fraction of the price? From an engineer's perspective, meaning we sometimes think too much about process and financial efficiency, our natural answer to that question would be that none would. Maybe the engineering curriculum should include a course about the societal responsibility towards engineering.

Our social system, in North America at least, is starting to become tarnished. We have some of the best technical universities in the world. However, once our engineering graduates step out of the gates, they find themselves in one of the worst job market declines in history. I find it somehow disturbing that a high-school dropout working on an automotive assembly line can have more in wages, benefits, and job security than a lot of engineers that I know.